Description

ABSTRACT

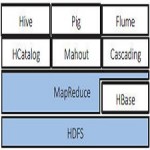

Within process mining the main goal is to support the analysis, improvement and apprehension of business processes. Numerous process mining techniques have been developed with that purpose. The majority of these techniques use conventional computation models and do not apply novel scalable and distributed techniques. In this paper we present an integrative framework connecting the process mining framework ProM with the distributed computing environment Apache Hadoop. The integration allows for the execution of MapReduce jobs on any Apache Hadoop cluster, enabling practitioners and researchers to explore and develop scalable and distributed process mining approaches. Thus, the new approach enables the application of different process mining techniques to event logs of several hundreds of gigabytes.

INTRODUCTION

We assume the reader to be knowledgeable with regard to the basics of process mining and refer to [1] for an in-depth overview. Nowadays, we are able to store huge quantities of event data.

In principle, an array of process mining techniques can be used to analyse these data. However, classical process mining techniques are not able to cope with huge quantities of data. Within the ProM framework [2] it is currently impossible to analyse event data whose size exceeds the computer's physical memory. Within process mining only a limited amount of research has been done concerning the integration of techniques that are designed to cope with enormous amounts of data. Divide-and-conquer-based approaches have been developed in order to reduce computational complexity.

Year : 2015

Publisher : IEEE

By : Sergio Hernández, S.J. van Zelst, Joaquín Ezpeleta, and Wil M.P. van der Aalst

File Information : English language / 5 pages / Size : 509 K
